Imagine being in Austin in 1995 when Scrum was first introduced. Or sitting at Snowbird Ski Resort in 2001 when the Agile Manifesto was written. These weren’t just technical evolutions. They were philosophical rewirings.

They reframed how teams thought about work itself.

Cut waste. Deliver value early. Measure velocity. Improve efficiency continuously.

These frameworks didn’t just help teams work faster. They helped teams understand their productivity in ways that were previously invisible.

Today, we’re at a similar inflection point with AI.

Most organizations currently treat AI as a software subscription. You pay a monthly fee. You receive output. And you accept whatever usage and cost comes with it.

But this perspective leaves out critical questions:

- Why did usage spike this month?

- Which workflows are inefficient and should be retired?

- Which architectural decisions increased cost?

- How much productivity did we actually gain from AI?

As AI becomes embedded deeper into development workflows, this lack of visibility becomes a dangerous blind spot.

Agile didn’t transform software development simply because it introduced new processes. It transformed development because it introduced visibility. Teams could measure velocity. They could identify inefficiencies. They could improve deliberately.

AI is now at the same turning point.

But unlike with human teams, whose work can already be measured, most organizations still lack clear visibility into how AI performs work, how efficiently it operates, and why costs rise or fall.

To reach its Agile moment, AI needs a measurable unit of work.

That unit is the token.

Unlocking the black box of token analytics

If leadership asks your design, development, and product teams, “Why are we paying $X for AI?”, what data can they point to in order to show the productivity behind that spend?

The answer, ultimately, is tokens.

Tokens determine both how much AI costs and how much work it performs. But in practice, most teams don’t have consistent visibility into token usage itself. They typically see the bill. They see the total cost. But they lack the deeper context needed to understand why those tokens were used, which workflows consumed them, or whether they were used efficiently.

This makes AI usage functionally opaque.

Leadership isn’t just asking what AI costs. They’re asking whether that cost reflects meaningful productivity—or avoidable inefficiency.

And without visibility into token usage, most teams simply don’t have a clear answer.
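As a rough illustration of what that missing visibility could look like, the sketch below groups token counts by workflow. It assumes each AI request is logged with a workflow label; the `UsageRecord` type, its field names, and the sample workflow names are hypothetical and not taken from any particular provider or tool.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class UsageRecord:
    workflow: str        # hypothetical label attached by the calling code
    input_tokens: int
    output_tokens: int


def tokens_by_workflow(records):
    """Sum input and output tokens per workflow label."""
    totals = defaultdict(lambda: {"input": 0, "output": 0})
    for r in records:
        totals[r.workflow]["input"] += r.input_tokens
        totals[r.workflow]["output"] += r.output_tokens
    return dict(totals)


def share_of_total(totals):
    """Return each workflow's fraction of all tokens, largest first."""
    grand_total = sum(t["input"] + t["output"] for t in totals.values())
    if grand_total == 0:
        return []
    shares = [
        (name, (t["input"] + t["output"]) / grand_total)
        for name, t in totals.items()
    ]
    return sorted(shares, key=lambda pair: pair[1], reverse=True)


# Made-up sample data, for illustration only.
records = [
    UsageRecord("code-review", 12_000, 1_500),
    UsageRecord("test-generation", 4_000, 2_500),
    UsageRecord("code-review", 9_000, 1_000),
]
totals = tokens_by_workflow(records)
for name, share in share_of_total(totals):
    print(f"{name}: {share:.0%} of tokens")
```

Even a breakdown this simple turns “the bill went up” into “this workflow accounts for most of our tokens,” which is the kind of question a single invoice total cannot answer.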

To understand how to bring visibility to AI, we first need to understand the unit that underpins all AI work.

Token 101

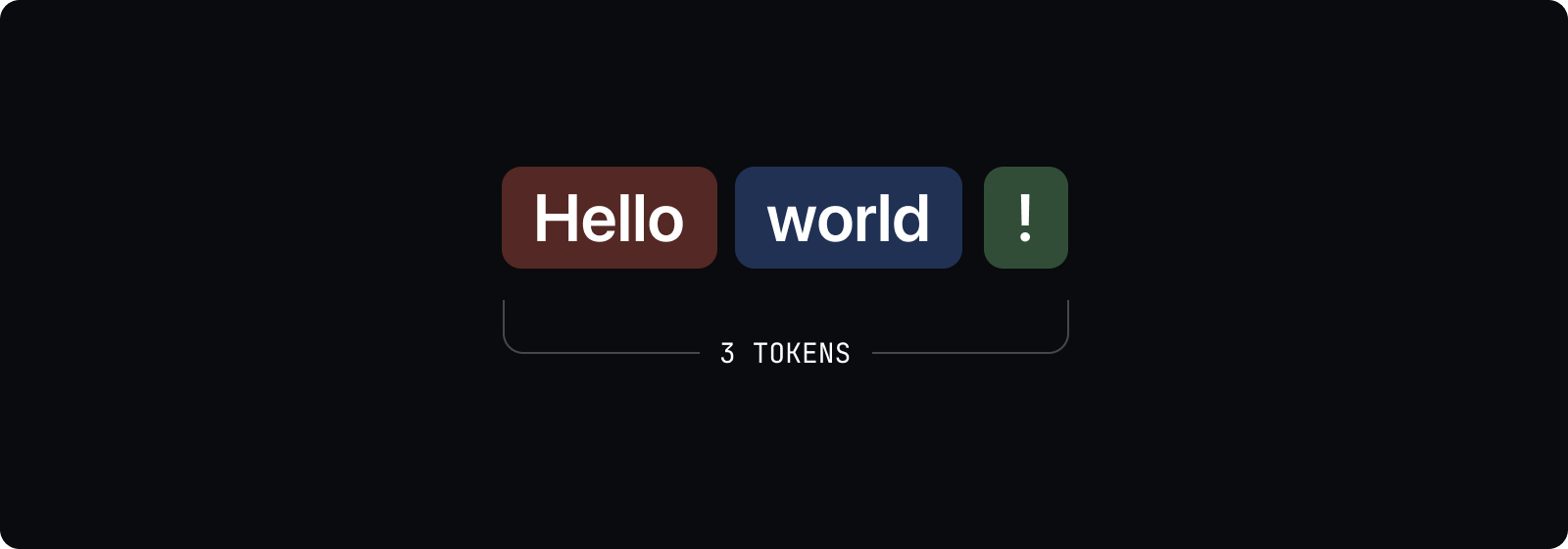

Before going further, it’s important to define what a token actually is.

Tokens are the basic unit of input and output used by AI models. Every instruction, piece of context, and generated response is broken into tokens.

Tokens don’t map perfectly to words. Some words are one token; others are split into several. As a rough approximation for English text, one token is about four characters, or about three-quarters of a word.
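To make that approximation concrete, here is a minimal estimator. It assumes roughly four characters per token and 0.75 words per token, which are rules of thumb for English text rather than properties of any specific model. Real counts come from the model’s own tokenizer and can differ noticeably, especially for code or non-English text.

```python
import math

# Rules of thumb for English text; both values are assumptions.
CHARS_PER_TOKEN = 4
WORDS_PER_TOKEN = 0.75


def estimate_tokens_from_chars(text: str) -> int:
    """Estimate token count from the number of characters."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def estimate_tokens_from_words(text: str) -> int:
    """Estimate token count from the number of whitespace-separated words."""
    return math.ceil(len(text.split()) / WORDS_PER_TOKEN)


prompt = "Deliver value early and measure velocity."
print(estimate_tokens_from_chars(prompt), estimate_tokens_from_words(prompt))
```

The two estimates will rarely agree exactly, which is a useful reminder that they are only ballpark figures for planning, not for billing.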

What matters most is this: tokens determine both cost and workload.

Every token processed requires compute. More tokens mean more work performed by the model, and more cost incurred.

In practical terms, tokens are how AI work is measured, and how AI usage is billed.

In this sense, tokens function as the atomic unit of AI labor.
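The link between tokens and billing can be sketched in a few lines. Many providers price input and output tokens separately, usually per million tokens; the two prices below are hypothetical placeholders, not real rates, and `request_cost` is an illustrative helper rather than part of any provider’s SDK.

```python
from decimal import Decimal

# Hypothetical prices in dollars per one million tokens; not real rates.
INPUT_PRICE_PER_MTOK = Decimal("3.00")
OUTPUT_PRICE_PER_MTOK = Decimal("15.00")
MILLION = Decimal(1_000_000)


def request_cost(input_tokens: int, output_tokens: int) -> Decimal:
    """Dollar cost of one request, given its input and output token counts."""
    return (
        Decimal(input_tokens) * INPUT_PRICE_PER_MTOK
        + Decimal(output_tokens) * OUTPUT_PRICE_PER_MTOK
    ) / MILLION


# A request with a large context and a short answer.
print(request_cost(input_tokens=20_000, output_tokens=1_000))
```

Under these assumed prices, most of the cost of that request comes from the context sent in, not from the answer that comes back.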

Reframing our view of tokens

Tokens give us something the AI ecosystem has historically lacked: a measurable unit of work.

Agile transformed software development by introducing measurable units like story points and velocity. These gave teams the ability to evaluate productivity, identify inefficiencies, and improve deliberately over time.

Tokens serve a similar role for AI.

They allow teams to measure how much work AI performs, how efficiently that work is completed, and how architectural or workflow decisions influence cost and performance.

This reframes AI entirely.

Instead of viewing AI as a flat subscription expense, it becomes a form of digital labor—one that can be measured, optimized, and improved.
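One way to make “how efficiently that work is completed” concrete is a tokens-per-completed-task figure, loosely analogous to velocity. The sketch below is an assumption about how a team might define such a metric, not an established standard; the function name and the sample runs are hypothetical.

```python
def tokens_per_completed_task(runs):
    """Average tokens spent per successfully completed task.

    runs: iterable of (tokens_used, succeeded) pairs for one workflow.
    Returns None when no task succeeded.
    """
    total_tokens = 0
    completed = 0
    for tokens_used, succeeded in runs:
        total_tokens += tokens_used  # failed attempts still cost tokens
        if succeeded:
            completed += 1
    if completed == 0:
        return None
    return total_tokens / completed


# Made-up runs for the same workflow before and after trimming its context.
baseline = [(18_000, True), (22_000, False), (20_000, True)]
trimmed = [(9_000, True), (11_000, True), (10_000, True)]

print(tokens_per_completed_task(baseline))
print(tokens_per_completed_task(trimmed))
```

Counting the tokens spent on failed attempts matters here: a workflow that is cheap per request but often needs retries can still be expensive per finished task.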

The hidden costs of inefficient token usage

When teams lack visibility into token usage, two major downsides emerge.

The first is financial: avoidable inefficiency.

Without understanding what drives token consumption, teams can unknowingly adopt workflows that are far more expensive than necessary. The same outcome can often be achieved using dramatically fewer tokens through better context structuring, retrieval strategies, or architectural decisions.

This isn’t theoretical. Small inefficiencies compound rapidly when AI is used across dozens of engineers, hundreds of workflows, and thousands of daily interactions.
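A back-of-the-envelope calculation shows how that compounding works. Every number below is a hypothetical input chosen for illustration, including the price per million tokens; substitute your own team size, usage, and rates.

```python
def monthly_waste(engineers, interactions_per_day, wasted_tokens,
                  working_days, price_per_mtok):
    """Return (wasted tokens per month, dollar cost of that waste)."""
    tokens = engineers * interactions_per_day * wasted_tokens * working_days
    dollars = tokens / 1_000_000 * price_per_mtok
    return tokens, dollars


tokens, dollars = monthly_waste(
    engineers=40,              # hypothetical team size
    interactions_per_day=50,   # AI requests per engineer per day
    wasted_tokens=3_000,       # unneeded context tokens per request
    working_days=21,
    price_per_mtok=3.00,       # hypothetical dollars per million tokens
)
print(f"{tokens:,} wasted tokens per month, about ${dollars:,.2f}")
```

Because the factors multiply, doubling any one of them (team size, request volume, or per-request waste) doubles the monthly total.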

The second downside is a bit more hidden: inconsistent reliability.

It may seem intuitive that giving AI more context will improve its performance. But in practice, excessive or poorly structured context can introduce noise, increase ambiguity, and make output less consistent.

This phenomenon, sometimes referred to as context bloat, occurs when models must sift through large amounts of irrelevant or redundant information to complete a task. The result can be slower responses, higher token usage, and increased likelihood of drift or inconsistent execution.

Put plainly, more tokens don’t automatically produce better outcomes. In many cases, they simply produce more noise.

Understanding when and why tokens are used is essential not just for cost control, but for maintaining quality and reliability.

Takeaways

Many AI development workflows repeatedly send large volumes of instructions, schemas, and tool definitions to the model through orchestration layers. Much of this context isn’t needed for the task at hand, but it still consumes tokens and increases cost.

Context optimization layers like FlyDocs help address this inefficiency by introducing structure to how context is delivered.

Instead of sending the full universe of instructions, schemas, and tools with every request, FlyDocs ensures models only receive the information necessary for the task at hand.

This makes leading models like those from OpenAI and Anthropic more efficient—not by changing the models themselves, but by improving how they are used.

The result is lower token consumption, more predictable performance, and clearer visibility into how AI performs work.

Less unnecessary context. Fewer tokens consumed. More predictable performance.
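The general idea of sending only task-relevant context can be sketched generically. The example below is not how FlyDocs works internally; it is a simplified illustration that assumes each piece of context carries tags and that a task declares which tags it needs. The `ContextItem` type, the tag names, and the catalog contents are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ContextItem:
    name: str
    tags: frozenset
    text: str


def select_context(catalog, task_tags):
    """Keep only the items that share at least one tag with the task."""
    wanted = set(task_tags)
    return [item for item in catalog if item.tags & wanted]


def total_chars(items):
    """Character count as a crude stand-in for token count."""
    return sum(len(item.text) for item in items)


# Made-up catalog of instructions, schemas, and tool definitions.
catalog = [
    ContextItem("billing_schema", frozenset({"billing"}),
                "table invoices(id, amount) " * 50),
    ContextItem("ticket_tool", frozenset({"tickets"}),
                "create_ticket(title, body) " * 20),
    ContextItem("style_guide", frozenset({"frontend"}),
                "use the shared button component " * 80),
]

selected = select_context(catalog, {"tickets"})
print([item.name for item in selected])
print(total_chars(selected), "of", total_chars(catalog), "characters sent")
```

Tag matching is the simplest possible selection rule; a production system would more likely rely on retrieval or explicit task specifications, but the effect on request size is the same in kind.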

The black box era is ending, and the Agile moment is upon us

The goal of token analytics isn’t simply to shave pennies. It’s to provide clarity.

- Clarity into AI productivity.

- Clarity into operational efficiency.

- Clarity into cost forecasting and ROI.

As AI becomes embedded deeper into product development, leaders need the ability to answer fundamental questions:

- How much work is AI actually doing?

- How efficiently is it operating?

- What will it cost to scale?

- Where are inefficiencies hiding?

Today, most organizations can’t answer these questions. They’re investing heavily in AI without the ability to measure its performance.

That lack of visibility won’t cut it for much longer.

At strideUX, in partnership with Plastr, we’ve been actively building and using FlyDocs to bring structure and visibility to how AI performs work.

The goal is to help teams structure context intentionally, reduce unnecessary token consumption, and introduce measurable visibility into spec-driven development.

It doesn’t replace existing models. It helps teams use them more efficiently, predictably, and transparently.

Because the future of AI adoption won’t be defined by access to models alone.

It will be defined by understanding how they work, how efficiently they operate, and how much value they actually deliver.

The black box is beginning to open.

AI’s Agile moment isn’t theoretical. It’s already beginning.